Lip Language Translator Using TENGs

Introduction

Lip language is an important skill for people who rely on voiceless communication. People with vocal cord lesions or laryngeal and lingual injuries use lip language to communicate. It can be life-changing for those who need it, though few other people learn to read it. To close this gap, a team of scientists from the USA and China developed a self-powered, economical technology based on triboelectric sensors. The sensors capture lip motions for selected vowels, words, phrases, and silent speech, and a neural network classifies the resulting signals. Trained on 20 classes with 100 samples each, the model reaches a test accuracy of 94.5%. The research is both a scientific achievement and a philanthropic service that improves people's lives and their ability to communicate.

Considerable research already exists on lip-language recognition, silent speech interfaces, and speech-enabling systems, including magnet-based, vision-based, ultrasound-based, inaudible-acoustic-based, and surface-electromyography-based solutions. Rapid advances in machine learning and deep learning now enable recognition with algorithms beyond the conventional ones.

Vision-based methods, however, are sensitive to facial angle, light intensity, head shaking, and objects blocking the face, so recognition failures are common. A non-invasive contact sensor that captures the movement of the muscles directly is an alternative to vision-based sensing. Triboelectric nanogenerators (TENGs) have gained traction for this reason. They convert small amounts of mechanical energy into electricity through contact electrification and electrostatic induction, which makes TENGs economical, self-powered sensors.

The researchers propose a lip-language decoding system (LLDS) that captures the motion of the mouth muscles with flexible, economical, self-powered sensors and processes the signals with a deep learning classifier. The sensors are placed over the mouth muscles, and polymer films are used to improve how they feel against the skin.

Design of LLDS & TENGs

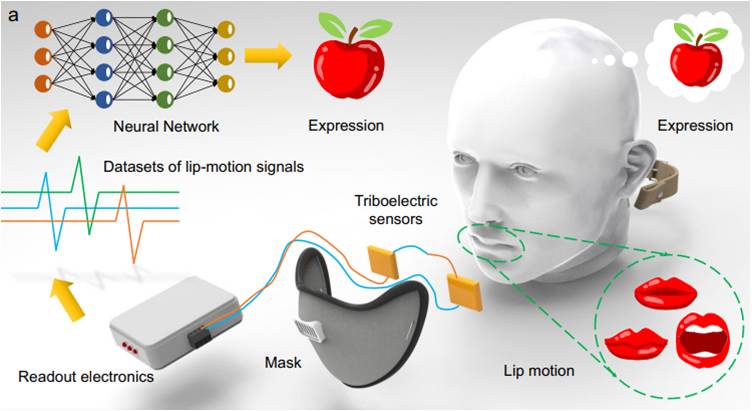

Fig 1 LLDS workflow representation

The proposed system comprises triboelectric sensors, a fixing mask, readout electronics, and a neural network classifier. The flexible triboelectric sensors are placed at the junction of the orbicularis oris, depressor anguli oris, risorius, zygomaticus, and buccinator muscles to capture muscle motion accurately. Each sensor detects lip movement and generates an electrical signal in response. The signals are passed to a trained neural network, which predicts the word or phrase being mouthed.

Characteristics of TENGs

The electrical characteristics of the TENGs play a vital role in the development of this device. They are characterized in terms of applied force, frequency, sensor size, and sensor structure. The electrical signals generated by different materials were also studied to select the materials used in the hardware. Paper, polyethylene terephthalate (PET), polytetrafluoroethylene (PTFE), polyimide, polyvinyl chloride (PVC), and fluorinated ethylene propylene (FEP) were each brought into contact with nylon. The recordings were made with a 20 × 20 × 5 mm³ sensor under a force of 5 N at a frequency of 2 Hz. Of these materials, PVC and FEP showed the best output performance against nylon. Similar experiments were carried out with different forces and frequencies. Detailed results and discussion are given in [1].

In brief, the observations drawn from these experiments are: 1) Increasing the sensor area improves the output signal. 2) The optimum sensor thickness is around 2 mm. 3) Voltage signals are higher with the sensors connected in series than in parallel. 4) Artificial sweat has minimal impact on the hardware.

Electrical signals from mouth motion vs sound signals

Mouth shapes during vocalization produce two typical types of signal. One is the waveform associated with the pronunciation of vowels; the other is the waveform for words and phrases. The signals are normalized to remove amplitude differences, which highlights the difference in shape between the two kinds of waveform.

Fig 2 Normalized voltage vs mouth shape

Additional information on how the signals generated by words and phrases differ, with examples, is given in [1].
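The normalization step described in this section can be illustrated with a minimal sketch. The article does not say which normalization the authors used, so peak normalization (dividing by the largest absolute value) is an assumption here, and the function name `normalize_peak` and both sample traces are hypothetical.

```python
def normalize_peak(signal):
    """Scale a voltage trace so its largest absolute value is 1.

    Peak normalization is an assumed choice; the source only says
    that amplitude interference is removed by normalization.
    """
    if not signal:
        return []
    peak = max(abs(v) for v in signal)
    if peak == 0:
        # A flat trace has no shape to preserve; return zeros.
        return [0.0 for _ in signal]
    return [v / peak for v in signal]


# Two made-up traces with the same shape but different amplitude,
# e.g. the same mouth movement made gently and then forcefully.
soft = [0.0, 0.5, 1.0, -0.5, 0.0]
strong = [0.0, 2.0, 4.0, -2.0, 0.0]

soft_n = normalize_peak(soft)
strong_n = normalize_peak(strong)

# After normalization only the waveform shape remains.
print(soft_n == strong_n)
```

Once amplitude is factored out, any remaining difference between two traces is a difference in waveform shape, which is the property used to tell vowels from words and phrases.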

Neural network Classifier

Deep learning algorithms are a vital part of this project, given the dynamic nature of the application. Training such algorithms normally requires large amounts of data. To work around this requirement, the authors use dilated recurrent neural network models. The approach divides the overall problem into modules, and a deep learning prototype is then built for each module.

Fig 3 Deep learning process flow chart
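To make the term "dilated recurrent" concrete, here is a minimal sketch of the general idea, not the authors' model. In a dilated recurrent layer the hidden state at step t depends on the state at step t - d rather than t - 1, and layers with growing dilation d are stacked. The scalar hidden state, the fixed untrained weights, the dilation schedule (1, 2, 4), and the sample trace are all assumptions chosen for illustration.

```python
import math


def dilated_rnn_layer(inputs, dilation, w_in, w_rec, bias):
    """One dilated recurrent layer with a scalar hidden state.

    h[t] = tanh(w_in * x[t] + w_rec * h[t - dilation] + bias),
    where h is taken as 0 whenever t - dilation < 0.
    """
    hidden = []
    for t, x in enumerate(inputs):
        prev = hidden[t - dilation] if t >= dilation else 0.0
        hidden.append(math.tanh(w_in * x + w_rec * prev + bias))
    return hidden


def stacked_dilated_rnn(inputs, dilations=(1, 2, 4),
                        w_in=0.8, w_rec=0.5, bias=0.0):
    """Feed the output of each layer into the next, wider-dilation layer."""
    out = list(inputs)
    for d in dilations:
        out = dilated_rnn_layer(out, d, w_in, w_rec, bias)
    return out


# Hypothetical normalized voltage trace from one sensor channel.
trace = [0.0, 0.2, 0.9, 0.4, -0.3, -0.8, -0.1, 0.0]
features = stacked_dilated_rnn(trace)
print(len(features))
```

Skipping d steps lets upper layers relate samples that are far apart in time without passing information through every intermediate step. A real model would use vector-valued states and learned weights, followed by a classification stage.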

Conclusion

This article summarized a methodology for a lip-language interpretation system, focusing on the hardware and the deep learning algorithm it uses. The research aims to improve social life for people with voice impairments. It is a testament to the proper use of science and technology and a benchmark for aspiring researchers.

References

- [1] Yijia Lu, Han Tian, Jia Cheng, Fei Zhu, Bin Liu, Shanshan Wei, Linhong Ji & Zhong Lin Wang, "Decoding lip language using triboelectric sensors with deep learning," Nature Communications (2022), https://www.nature.com/articles/s41467-022-29083-0
